Cloud Video Streaming Without Third-Party Platforms

Live video is widely used across news, events, sports, corporate communications, product launches, and industrial scenarios. These use cases share the same operational requirement: the broadcast has to reach viewers predictably, even when the audience grows quickly, the uplink becomes unstable, or the stream needs to be delivered through multiple channels at the same time.

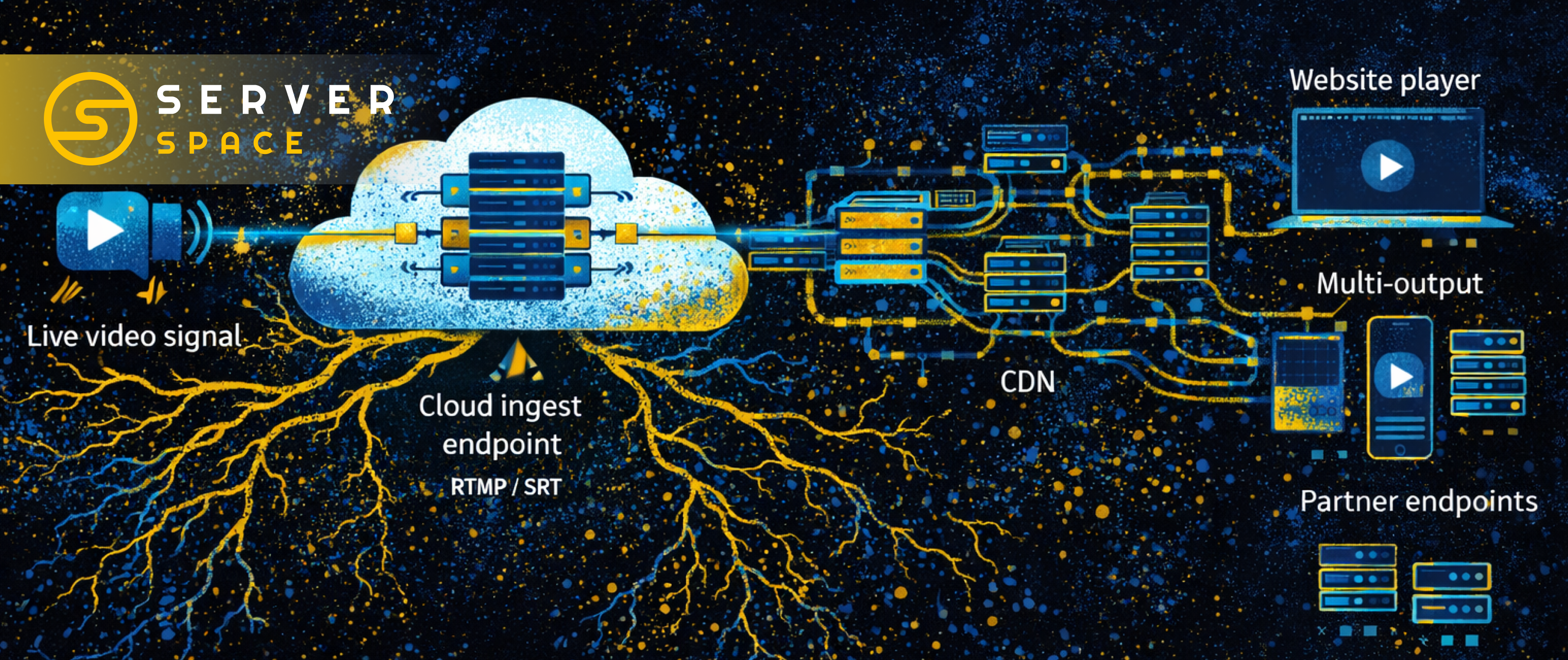

Scope: live streaming transport in the cloud (ingest, packaging, CDN-backed delivery), multi-output distribution, protocol choices (RTMP/SRT, HLS/LL-HLS), baseline infrastructure requirements, and practical criteria for selecting a cloud live streaming service.

Live streaming infrastructure is typically built around a transport layer: ingest, processing, delivery, and scaling. This approach supports “send the stream once, deliver it everywhere,” and it is increasingly delivered as a managed cloud service rather than a custom deployment per project.

Not a video platform

“Streaming” is often associated with platform features such as landing pages, chat, registration, moderation, and built-in players. Those features are part of a product layer. The underlying technical problem is different: receiving a live signal, keeping it stable, and delivering it to viewers at scale.

It helps to separate two components:

- A platform layer (interfaces and audience-facing features).

- A transport layer (ingest, delivery, scaling, redundancy).

The transport layer is the foundation behind most cloud-based video streaming deployments. It is also the part that determines whether a broadcast is actually watchable under real load.

From ingest to playback

Transport streaming is usually implemented as a three-step chain. This is the basis for live streaming delivery in the cloud and for hosting a streaming backend as part of your infrastructure.

Ingest

You send a live stream to a cloud endpoint once, from wherever you have connectivity. Common ingest protocols include RTMP and SRT. RTMP is widely used because it is supported by many tools and workflows. SRT is often preferred when the uplink is unstable: it runs over UDP and retransmits lost packets within a configurable latency window, so it handles packet loss and jitter more gracefully.
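
As an illustration of the ingest step, the sketch below builds an ffmpeg argument list for pushing a source to either an RTMP or an SRT endpoint. The host name, ports, and stream key are hypothetical placeholders; a real service publishes its own endpoint format, and `-c copy` assumes the source is already encoded in a codec the target container accepts (for example H.264/AAC).

```python
from urllib.parse import urlencode


def build_ingest_command(source: str, protocol: str, host: str, port: int,
                         stream_key: str) -> list[str]:
    """Return an ffmpeg argument list that pushes `source` to an ingest endpoint."""
    # -re reads the input at its native rate, which is what a live push needs.
    # -c copy forwards the existing audio/video without re-encoding (assumption:
    # the source codecs are compatible with the output container).
    common = ["ffmpeg", "-re", "-i", source, "-c", "copy"]
    if protocol == "rtmp":
        # RTMP carries an FLV-muxed stream.
        return common + ["-f", "flv", f"rtmp://{host}:{port}/{stream_key}"]
    if protocol == "srt":
        # SRT commonly carries MPEG-TS; caller mode means we open the connection.
        query = urlencode({"mode": "caller", "streamid": stream_key})
        return common + ["-f", "mpegts", f"srt://{host}:{port}?{query}"]
    raise ValueError(f"unsupported ingest protocol: {protocol}")
```

The returned list can be passed to `subprocess.run` once the placeholders are replaced with the endpoint details issued by the streaming service.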

Delivery to viewers

The service packages the stream for playback and distributes it in delivery formats such as HLS or LL-HLS. Both are served over plain HTTP, so they play in browsers (natively in Safari, through JavaScript players elsewhere) and scale effectively through a CDN. The packaging and delivery layer is what separates a stream that works in a small test from one that stays stable during a peak audience.
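
To make the packaging step concrete, here is a minimal sketch of the kind of live HLS media playlist a packager publishes: a sliding window of recent segments that players re-fetch over HTTP. The segment naming scheme (`segment_<n>.ts`) and the function name are assumptions for illustration, and `durations` is assumed to be non-empty.

```python
import math


def live_media_playlist(first_sequence: int, durations: list[float]) -> str:
    """Render a live HLS media playlist for a sliding window of segments."""
    lines = [
        "#EXTM3U",
        "#EXT-X-VERSION:3",
        # The target duration is an integer upper bound on segment length;
        # rounding the longest segment up keeps every segment within it.
        f"#EXT-X-TARGETDURATION:{math.ceil(max(durations))}",
        # Sequence number of the first segment still listed in the window.
        f"#EXT-X-MEDIA-SEQUENCE:{first_sequence}",
    ]
    for offset, duration in enumerate(durations):
        lines.append(f"#EXTINF:{duration:.3f},")
        lines.append(f"segment_{first_sequence + offset}.ts")
    # No #EXT-X-ENDLIST tag: its absence tells players the stream is still live.
    return "\n".join(lines) + "\n"
```

Because the playlist and segments are ordinary HTTP resources, a CDN can cache them like any other static file, which is where the scaling behavior comes from.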

Multi-output

The same ingest stream can be distributed to multiple destinations: a website player, a mobile app, partner endpoints, or other channels. This reduces manual duplication and provides alternate paths if one destination has issues or becomes unavailable.
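
The fan-out idea can be sketched as a loop that hands each incoming chunk to every configured output and isolates failures, so one broken destination does not interrupt the rest. This is a simplified in-process model, not how any particular service implements it; the `Output` callable type and the destination names in the usage note are hypothetical.

```python
from typing import Callable

# An output is anything that accepts a chunk of stream data.
Output = Callable[[bytes], None]


def fan_out(chunk: bytes, outputs: dict[str, Output]) -> dict[str, bool]:
    """Send one chunk to every output and report which deliveries succeeded."""
    results: dict[str, bool] = {}
    for name, send in outputs.items():
        try:
            send(chunk)
            results[name] = True
        except Exception:
            # A failing destination must not interrupt the others.
            results[name] = False
    return results
```

A caller might register outputs such as `{"site": push_to_player, "partner": push_to_partner}` and use the returned status map to alert on, or retry, the destinations that failed.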

Protocol and format overview

The table below summarizes common choices for ingest and delivery. Exact behavior depends on encoder settings, player implementation, and network conditions, but these are the typical selection criteria.

| Layer | Protocol / format | What it is used for | Typical reason to choose it | Trade-offs |
|---|---|---|---|---|
| Ingest | RTMP | Send live video from an encoder (OBS and similar) to the cloud endpoint | Broad tooling support and simple workflows | Less resilient on unstable uplinks; depends heavily on network quality |
| Ingest | SRT | Send live video over links with packet loss/jitter while keeping the stream stable | Better tolerance to loss and jitter (common for field production and mobile uplinks) | More parameters to tune; encoder/player support varies by toolchain |
| Delivery | HLS | Segmented playback format delivered via HTTP and CDN | Scales well and works broadly in browsers and devices | Higher end-to-end latency than low-latency modes |
| Delivery | LL-HLS | Low-latency variant of HLS with shorter segments/partials | Lower and more predictable latency while retaining HLS-style distribution | More sensitive to player/CDN configuration; operational tuning matters |
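
The latency trade-off between HLS and LL-HLS can be approximated with simple arithmetic. The HLS specification advises players not to start closer than three target durations to the live edge, so the playback delay is roughly three times the unit the player waits on. The sketch below applies that rule of thumb; using the same multiplier for LL-HLS parts and the `pipeline_overhead_s` parameter (encoding, packaging, CDN propagation) are assumptions for illustration, and real figures should come from measurement.

```python
def estimated_latency_s(unit_duration_s: float, hold_back_units: int = 3,
                        pipeline_overhead_s: float = 0.0) -> float:
    """Rough glass-to-glass latency: player hold-back plus pipeline overhead."""
    if unit_duration_s <= 0 or hold_back_units < 1 or pipeline_overhead_s < 0:
        raise ValueError("durations must be positive and overhead non-negative")
    # The player stays `hold_back_units` segments (or parts) behind the live edge.
    return unit_duration_s * hold_back_units + pipeline_overhead_s
```

With 6-second segments this gives about 18 seconds before any overhead, while 1-second parts give about 3 seconds, which matches the direction of the trade-offs in the table.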

How live streaming developed

Online live video began as an experiment in the 1990s, when consumer internet connections and encoding efficiency were limiting factors. As bandwidth improved and codecs became more practical, live video turned into a common format: start a broadcast and viewers can watch from a wide range of devices.

Early adoption was driven by communities where immediacy matters more than polished production. Gaming and esports normalized real-time commentary and audience interaction. Other industries also adopted live formats early, including adult entertainment, where interactivity was easier to monetize than passive viewing.

A widely cited milestone was Justin Kan’s continuous lifecast in 2007, which launched Justin.tv; the site's gaming category was later spun off as Twitch. YouTube expanded live video into mainstream usage, and the format moved quickly beyond gaming into education, fitness, music, business events, and similar scenarios.

Why live streaming moved to the cloud

Live streaming tends to fail at the worst moment: when viewers are already watching and the broadcast is in progress. Teams adopt cloud streaming architectures primarily for operational reasons rather than novelty.

- Audience spikes: a broadcast can move from a small audience to several times the expected load with little warning.

- Geography: viewers far from the origin are more exposed to delay, buffering, and quality drops if delivery is not distributed.

- Reliability requirements: some broadcasts cannot tolerate downtime during the event window.

- Scaling without rebuilding the stack: delivery infrastructure designed for growth reduces the need for ad hoc changes under pressure.

This shift also reframes streaming as a modular cloud video service: a transport and delivery layer that can be integrated into a product, without rebuilding distribution each time traffic changes.

Use cases where a transport layer is the priority

Transport-focused streaming fits scenarios where delivery reliability and distribution control matter more than platform features.

- Media, broadcasters, production, and news: ingest from remote locations and deliver reliably to viewers or partners while keeping control over the player and distribution strategy.

- Sports, events, community broadcasts, cultural and religious streams: one ingest stream delivered through multiple viewing points, stable behavior during peaks, and short deployment timelines.

- Corporate communications and training: controlled distribution (domain, access policy, viewing environment). Platform features such as chat, registration, and polls are often handled separately.

- IoT, drones, robotics, video monitoring, and industrial use cases: delivery to operators over inconsistent uplinks, with the option to distribute, record, or forward the stream.

- E-commerce and brand broadcasts: peak handling and reduced dependence on third-party platform rules, where outcomes are strongly affected by production and format design.

The same transport model also applies to audio streaming. Music streams, talk formats, and continuous programming typically need stable ingest, predictable delivery, and scaling behavior similar to live video workflows.

What to check when selecting cloud streaming transport

If the service is used primarily as a transport layer, a short checklist helps keep evaluation practical.

- Protocol support: ingest and delivery formats required by your workflow.

- Multi-output: ability to distribute one ingest stream to multiple destinations.

- Peak behavior: stable performance under audience growth, not only at low traffic.

- Latency control: predictable latency and consistent playback behavior, not minimum theoretical numbers.

- Security and access control: viewing restrictions, baseline protection, and logging.

- Pricing clarity: what is billed by default and what counts as an add-on.

Operational “surface area” also matters. Some teams want only delivery endpoints. Others need the player page, API, and stream endpoints under the same domain, or they want hosting for the surrounding application stack. If the workflow includes replays and assets, it is common to keep on-demand media in object storage while using CDN-backed delivery for both live and recorded playback.

Baseline infrastructure requirements for a stable stream

Exact requirements depend on codec settings, resolution, and the number of outputs, but teams typically plan capacity so the stream is not running at the edge of available resources.

- Compute: 2–4 vCPU and 4–8 GB RAM is a typical starting range for a single stream pipeline.

- Storage: NVMe-class disks are helpful when recording, segmenting, or working with video fragments in real time.

- Network: bandwidth becomes a primary constraint as audiences and geography expand, and it often matters as much as CPU.

When building on raw infrastructure, the decision usually becomes whether to run a dedicated streaming VPS or to rely on a managed transport layer. Both approaches can be viable. The consistent requirement is leaving enough headroom so moderate spikes do not translate into quality degradation.
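
Headroom planning for the network side can be worked through with a short calculation: aggregate delivery bandwidth is roughly concurrent viewers multiplied by stream bitrate, plus a safety margin. The 25% default margin below is an assumption, not a recommendation from any provider, and with a CDN in front most of this traffic is served from edge caches rather than from the origin.

```python
def required_egress_mbps(viewers: int, bitrate_mbps: float,
                         headroom: float = 0.25) -> float:
    """Aggregate delivery bandwidth for concurrent viewers, plus spare capacity."""
    if viewers < 0 or bitrate_mbps < 0 or headroom < 0:
        raise ValueError("inputs must be non-negative")
    # Each viewer pulls the full stream bitrate; headroom absorbs moderate spikes.
    return viewers * bitrate_mbps * (1 + headroom)
```

For example, 1,000 concurrent viewers at 4 Mbps with the default margin come to about 5,000 Mbps (5 Gbps) of total delivery capacity.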

Where live streaming is going

Live streaming expectations have changed. A stream that merely plays is no longer sufficient for many audiences and business scenarios. Several trends are shaping current implementations and service design.

Lower latency as a default

Audience expectations increasingly assume near-real-time playback. Delivery is moving toward lower and more stable latency without giving up scalability. In practice, predictability is the key: it keeps hosts, production teams, and viewers synchronized and reduces operational uncertainty.

Multi-path distribution as standard practice

Relying on a single distribution channel is increasingly treated as operational risk. Multi-output, alternate delivery paths, and the ability to run streams under your own domain or player are becoming baseline requirements for many deployments.

More automation with fewer manual controls

Large audiences mean a wide range of network conditions: from high-quality wired links to weak mobile connections. Delivery systems continue moving toward automatic adaptation of quality and simpler configuration surfaces, with operators focusing on the broadcast rather than constant tuning.
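
Automatic quality adaptation usually comes down to a player choosing, from a ladder of encoded bitrates, the highest one its measured throughput can sustain. The sketch below shows that selection rule in its simplest form; the ladder values and the 0.8 safety factor are hypothetical, and production players add smoothing and buffer-based logic on top.

```python
# Hypothetical bitrate ladder in kbps, lowest to highest quality.
LADDER_KBPS = (400, 1200, 2500, 5000)


def pick_rendition(throughput_kbps: float, ladder=LADDER_KBPS,
                   safety: float = 0.8) -> int:
    """Pick the highest rendition that fits within a fraction of throughput."""
    # Spend only part of the measured throughput to leave room for fluctuation.
    budget = throughput_kbps * safety
    affordable = [bitrate for bitrate in sorted(ladder) if bitrate <= budget]
    # Fall back to the lowest rendition when nothing fits the budget.
    return affordable[-1] if affordable else min(ladder)
```

A viewer measuring 4,000 kbps would be served the 2,500 kbps rendition, while a weak mobile connection at 300 kbps falls back to the 400 kbps one.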

Growth of private and corporate streaming

Internal broadcasts, training, and corporate events prioritize control: access policies, domains, logging, and security posture. In these scenarios, the transport layer and delivery predictability are often more important than audience-facing platform features.

Resilient ingest remains critical

Field production, remote locations, temporary uplinks, and mobile networks are common in real deployments. Protocols and workflows that handle loss and jitter effectively remain a differentiator for operational stability.

The near-term direction is consistent: stable low-latency delivery, multi-output by default, and stronger control for the stream owner without dependence on a single platform.

FAQ

What is transport streaming in simple terms?

It is a service that receives a live stream and handles delivery to viewers. Many deployments also use it to distribute the same stream to multiple destinations.

Why separate transport from a video platform?

It reduces dependence on a single platform and its rules. It also enables running the stream on your own site or player, keeping alternate distribution paths, and managing delivery independently from platform features.

What is the difference between HLS and LL-HLS?

HLS is a standard delivery format that serves video as HTTP segments, which scales well through a CDN but adds latency. LL-HLS reduces latency by publishing shorter partial segments while preserving HLS-style scaling characteristics.

When is SRT useful?

SRT is often used when the uplink is unstable (for example, on-location mobile internet) and the workflow needs better resilience to packet loss and jitter for ingest into the cloud.

Can one stream feed multiple channels?

Yes. A common setup is a single ingest input combined with multiple outputs for distribution to different destinations.

Serverspace as an example of transport-oriented live streaming

Serverspace Live Streaming is designed as live video transport: a single ingest stream can be delivered through CDN-backed playback and distributed via multi-output when needed. The service can be used as a managed transport layer, and it can also be paired with dedicated cloud infrastructure when a project requires a specific server configuration for streaming workloads.

Note: streaming requirements vary by codec, resolution, retention, distribution geography, and access controls. Capacity planning and protocol choices should be validated against real measurements and the operational constraints of the broadcast.